The ML gaze

Exploring our relationship with machine learning surveillance.

Tools used: JavaScript, Google Vision AI, HTML, CSS, Figma

Is the gaze of machine learning different or familiar?

ML is always passively gazing at us.

As a woman of color, I find the patriarchal male gaze always present in my life, but I wondered what the ML gaze is like.

Context

The project explores what it means to be gazed upon by machine learning at a time when these models are perpetually present.

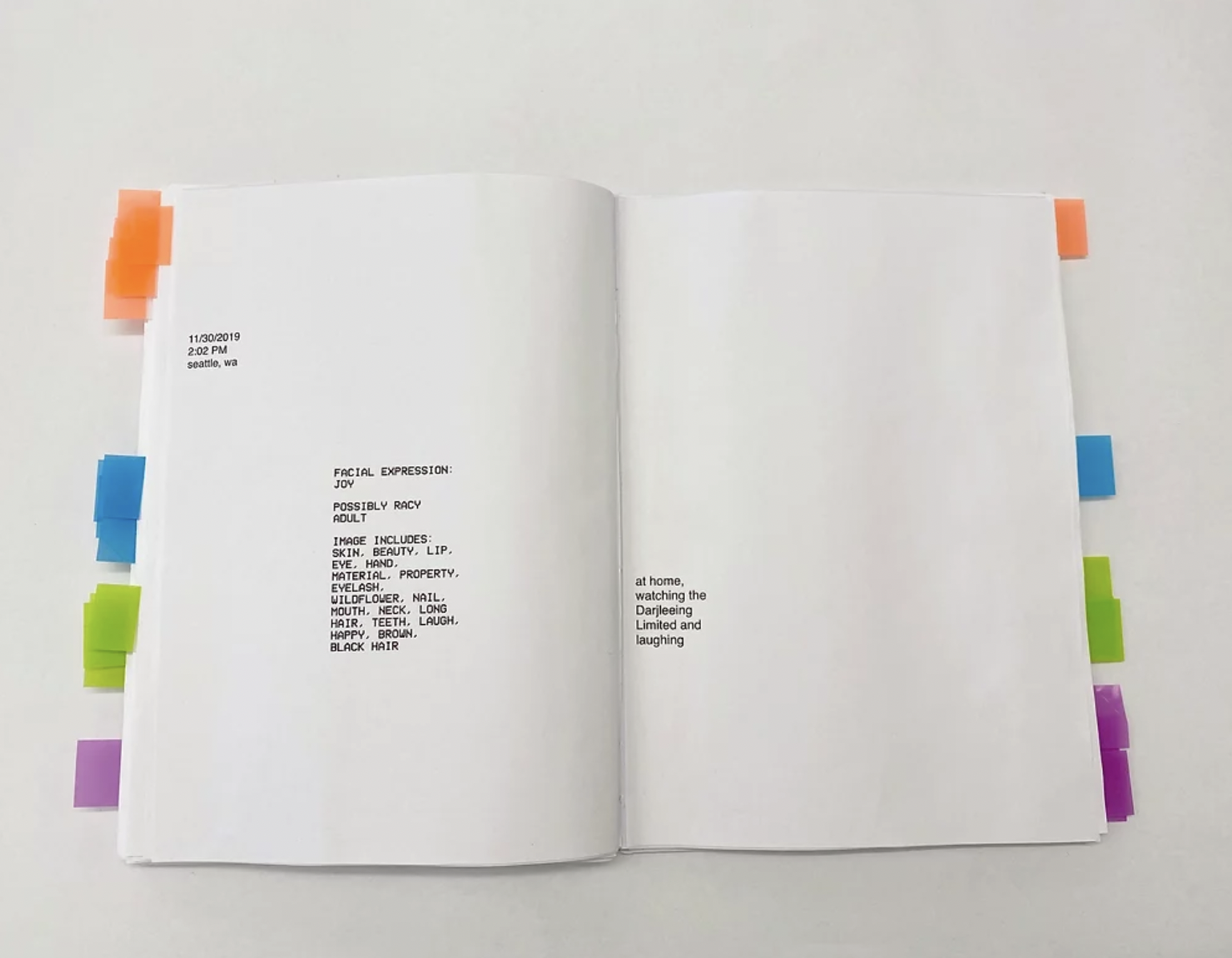

Google’s Vision is a set of machine learning models that analyze images for objects, facial expressions, and the type of content (if it is racy, adult, spoof, or medical).
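As a rough illustration of the kinds of analysis described above, the sketch below builds a request body for the Vision REST endpoint `images:annotate`, asking for objects, faces, labels, and SafeSearch content types in one call. The helper names `buildAnnotateRequest` and `annotate`, the choice of features, and the use of an API key are assumptions made for this example; the project's actual code is not shown here.

```javascript
// Hypothetical helper: builds the JSON body for a single Vision
// `images:annotate` request. `base64Image` is the image encoded as base64.
function buildAnnotateRequest(base64Image) {
  return {
    requests: [
      {
        image: { content: base64Image },
        features: [
          { type: "OBJECT_LOCALIZATION" },   // objects in the frame
          { type: "FACE_DETECTION" },        // facial expressions (joy, sorrow, ...)
          { type: "LABEL_DETECTION" },       // general labels for the image
          { type: "SAFE_SEARCH_DETECTION" }, // adult, spoof, medical, violence, racy
        ],
      },
    ],
  };
}

// Hypothetical sender: needs a valid API key and network access,
// so it is defined here but not called.
async function annotate(base64Image, apiKey) {
  const res = await fetch(
    `https://vision.googleapis.com/v1/images:annotate?key=${apiKey}`,
    {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify(buildAnnotateRequest(base64Image)),
    }
  );
  if (!res.ok) {
    throw new Error(`Vision request failed with status ${res.status}`);
  }
  const json = await res.json();
  return json.responses[0]; // one response per request in the batch
}
```

Each response would then hold what the models "saw" for a single capture, which can be stored next to the handwritten note for that moment.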

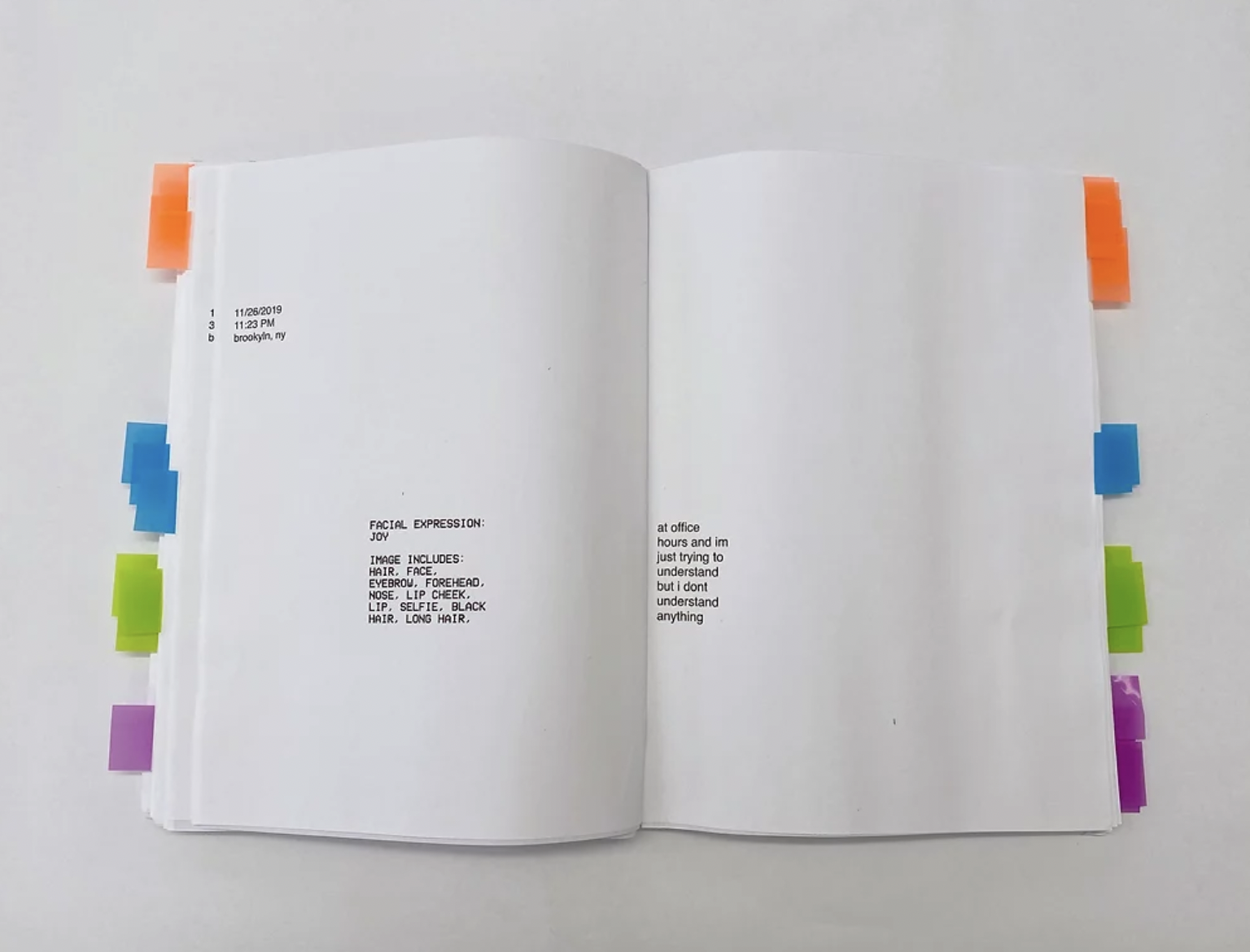

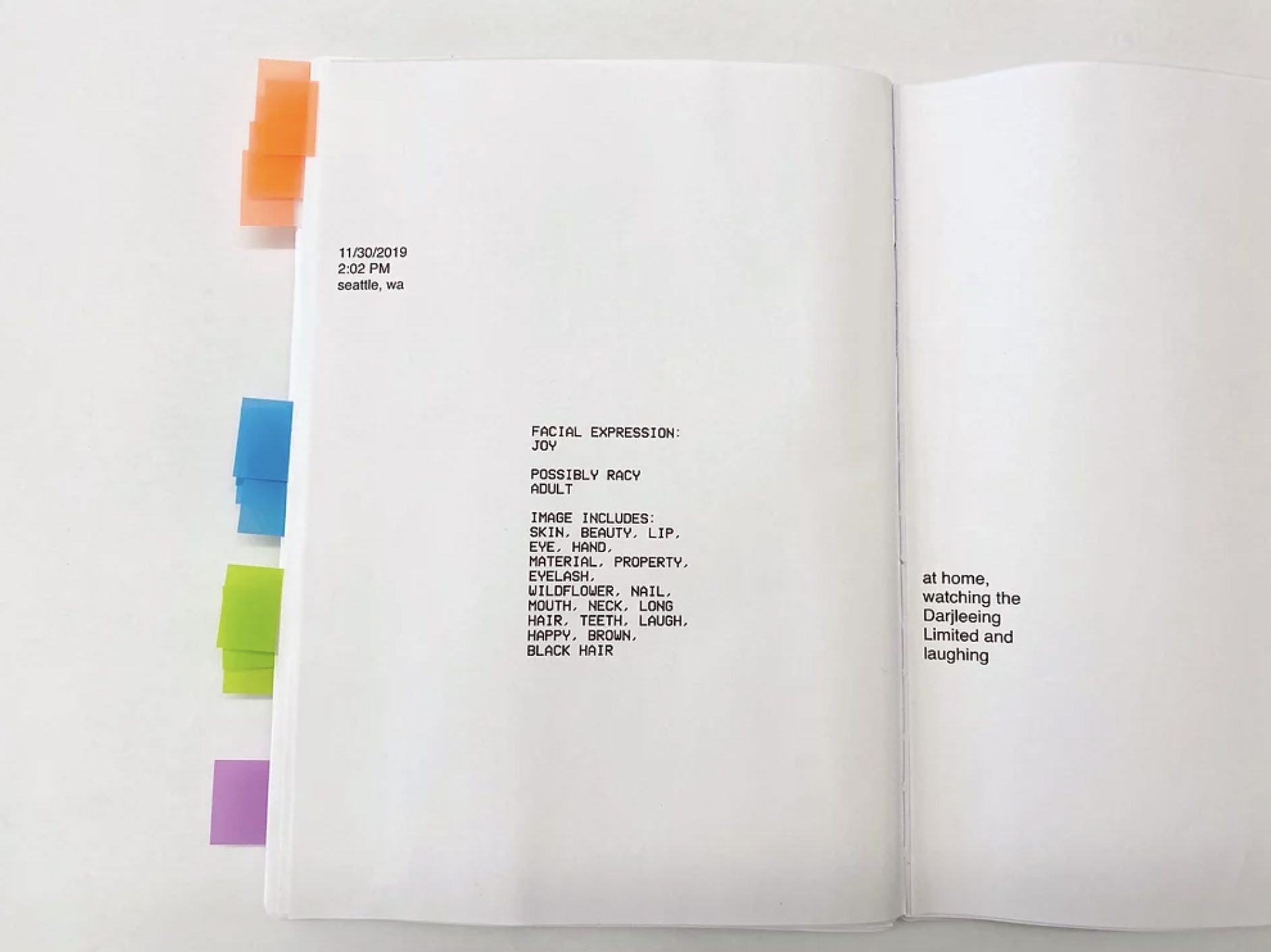

I had Google’s Vision watch me from my laptop for a month. At random points during each day, I would trigger Google’s Vision models to record what they “saw”. At the same time, I would write down what I was actually doing at that moment.

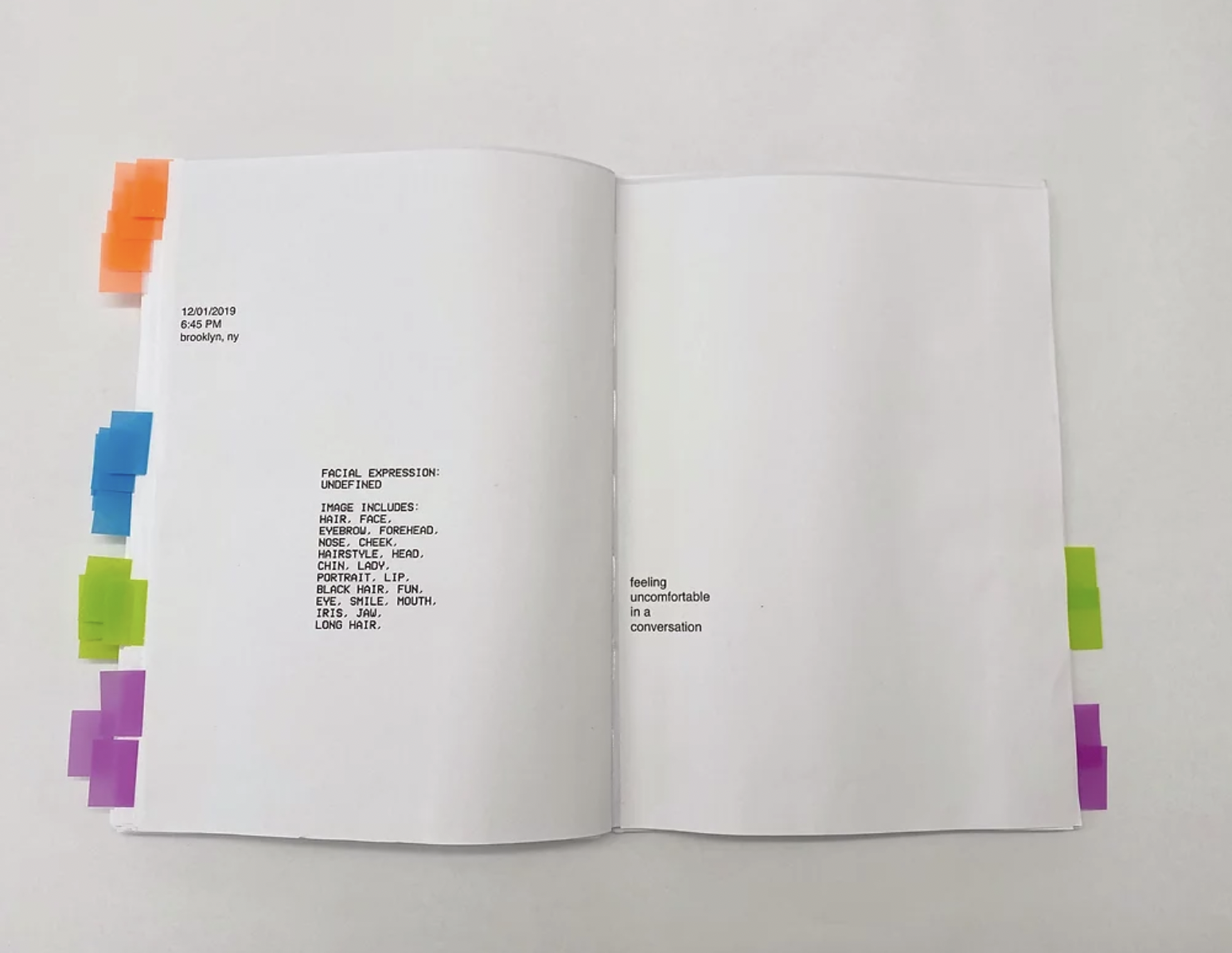

Each flag on the side of a page corresponds to one of the following labels that Google’s Vision assigned to my image: sorrow, fun, racy, and smile. I selected these labels because they appeared so frequently and because I was curious about them. The flags serve as a form of data visualization.
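One way to see which labels come up most often is to tally them across all stored responses. The sketch below is only an illustration under several assumptions: that "sorrow" comes from the face annotation's `sorrowLikelihood`, that "racy" comes from the SafeSearch annotation, that "fun" and "smile" arrive as label descriptions, and that a likelihood of `POSSIBLE` or higher counts as a flag. The function names and the threshold are hypothetical.

```javascript
// Likelihood values returned by Vision, ordered from least to most likely.
const LIKELIHOODS = [
  "UNKNOWN", "VERY_UNLIKELY", "UNLIKELY", "POSSIBLE", "LIKELY", "VERY_LIKELY",
];

// True when `value` is at or above `threshold` on the likelihood scale.
// A missing value is treated as below every threshold.
function atLeast(value, threshold) {
  return LIKELIHOODS.indexOf(value) >= LIKELIHOODS.indexOf(threshold);
}

// Hypothetical mapping from one Vision response to the four page flags.
function flagsFor(response, threshold = "POSSIBLE") {
  const faces = response.faceAnnotations || [];
  const labels = (response.labelAnnotations || []).map((l) =>
    l.description.toLowerCase()
  );
  const safe = response.safeSearchAnnotation || {};

  const flags = [];
  if (faces.some((f) => atLeast(f.sorrowLikelihood, threshold))) flags.push("sorrow");
  if (labels.includes("fun")) flags.push("fun");
  if (atLeast(safe.racy, threshold)) flags.push("racy");
  if (labels.includes("smile")) flags.push("smile");
  return flags;
}

// Counts how many captures carry each flag.
function countFlags(responses, threshold = "POSSIBLE") {
  const counts = { sorrow: 0, fun: 0, racy: 0, smile: 0 };
  for (const response of responses) {
    for (const flag of flagsFor(response, threshold)) {
      counts[flag] += 1;
    }
  }
  return counts;
}
```

Raising the threshold to `LIKELY` would make the flags more conservative; the choice changes how the book reads, so it is a design decision as much as a technical one.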

Process

When creating this work, it was important to me to make all the data I had collected accessible. To my knowledge, this was the first time someone had been truly present with their perpetual shadow, ML algorithms, and the insights the piece offers should not be accessible only to people with high technical literacy.

After extensive research, I decided that the format should meet the following constraints:

- offer an intimate one-on-one experience

- push away from the reliance on digital mediums

- not be layered with cliches about surveillance

- show main points that I want to highlight for educational purposes while maintaining the "art" quality

Bringing it to life

To make this piece, I began by finding and purchasing blank books on Amazon.

I then used Figma to decide the layout of every page. I tested multiple variations of the layout and design with six people until I reached the current design.

Finally, I added the tags. The color assigned to each label was essentially random, since I did not want there to be a clear logic to how the colors were selected.